January bandwidth warning -> cause of usage found??

Hi all,

Unfortunately, bandwidth warning messages have started to turn up in my inbox.

With about 28 hours to go, I hope the site won't get knocked off the web.

If it does, you know why!

Neil.

Last edited by Neil on Wed Feb 01, 2006 12:57 am, edited 2 times in total.

-

Halifax--lad

- Established Member

- Posts: 227

- Joined: Sat Sep 10, 2005 11:27 pm

Is there no way you can get some of the functions of your server to work off the other mirrors, so that if yours vanishes for a bit the other servers can carry on working? Or is that not possible?

How much extra would you have to spend each month to up your bandwidth? Maybe you should move the PayPal donation link to the top of your webpages; it may attract people to it a bit more.

Hi,

I reckon the site will be OK this month.

There is no way at the moment to share the data out amongst the mirrors, as my MySQL databases are quite large due to all the BOINC accounts that are stored. Last time I checked the Seti database was about 20MB!

Out of all my web host's packages, I'm on the most expensive and feature packed one, merely for the bandwidth (20GB), which obviously will soon not be enough.

As I hardly use the disk space provided, I'll see if I can broker a deal. I did find an ideal UK web host with unlimited bandwidth and cheaper prices, but unfortunately, they do not offer ssh access! D'oh.

Rest assured, only the boinc subdomain should be affected by the bandwidth restrictions, as I have reserved a small amount for my other subdomains as well as the TLD, so this forum should still be up!

I will do my best to keep the site online - but remember, it's a free service!

Neil.

-

Halifax--lad

- Established Member

- Posts: 227

- Joined: Sat Sep 10, 2005 11:27 pm

Hi again,

I think I may have found a bigger cause of my bandwidth woes...

Although the site gets 3 - 4 million hits a month, the vast majority are to the graphics generation scripts, which at the moment pass the full burden of generating the images to the mirrors, and therefore, only a header redirect needs to be issued.

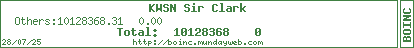

Whilst hunting through my stats logs to see what was going on, I found this:

Has you can see, the GoogleBot is taking a fair junk of my bandwidth!!!

I have done some research and have put a robots.txt file in place to stop the bot from indexing certain pages on my site. In particular, I think it may have been going mad when indexing http://boinc.mundayweb.com/stats/ due to all the possible links that can be generated from these pages.
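As a rough illustration of the kind of rule described above, the sketch below uses Python's standard `urllib.robotparser` to check what a robots.txt would block. The rule text is an assumption made for illustration (the actual file is not quoted in this thread), and `is_allowed` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the real file on the site may differ.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /stats/
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt lets user_agent fetch url."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    base = "http://boinc.mundayweb.com"
    for path in ("/", "/stats/"):
        print(path, is_allowed("Googlebot", base + path))
```

Note that robots.txt is only a request: well-behaved crawlers honour it, but nothing enforces it on the server side.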

If I can reduce the 8GB GoogleBot usage down to less than a GB, it would certainly help!

Watch this space!

Neil.

I discovered Google was over-enthusiastic on my website......I've got lots of photos so it went to each page.

Not got as much as you but I was monitoring to see how many people visited my site and it screwed my stats up.

Google's useful when you want to find something, just not when it wants something to find.

Who knows, you may even be able to downgrade to a lower package......

Glad you found the cause. 8GB a month, that's a lot.

Where are you based and who are you with, hosting wise???

I've found one that is 50GB bandwidth with SSH access for £11.99 per month (usually £14.99 - it's on promotion)

It's the 1&1 Business Pro package (http://www.1and1.co.uk)

Been with them a few months, no complaints so far.

Cruncher: i7-8700K (6 cores, 12 threads), 16GB DDR4-3000Mhz, 8GB NVIDIA 1080

-

Lee Carre

- Member

- Posts: 17

- Joined: Tue Jan 31, 2006 1:34 am

- Location: Jersey, Channel Islands, Great Britain (UK)

using robots.txt is a good start, and i'm glad to see it's only in the root, it won't work anywhere else

enabling caching will make a BIG difference, and not only to google (it helps google index your content faster too), it'll help with all your users as well, it'll make everything seem faster, and reduce your bandwidth a LOT!

there's a few threads at webmasterworld.com

I made a thread about caching over at SETI, plenty of info about it there, and a link to a highly recommended guide as well

webmaster world have some good info too: Are you using If Modified Since?

google (not sure about others) and any established browsers (including RSS readers like SharpReader) use conditional GET requests (sending "if-modified-since" in the request header), so if a page hasn't changed (like old news archives for example) then your server (should) only send the headers back with a "HTTP 304 Not Modified"

which takes almost nothing in the way of bandwidth and resources, apache can handle hundreds of these a second without a problem
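To make the conditional GET idea concrete, here is a minimal sketch of the decision a server makes. It is not how Apache implements it, just the logic; `conditional_get` is a hypothetical name, and the sketch assumes `last_modified` is a timezone-aware datetime in UTC.

```python
from datetime import datetime, timezone
from email.utils import format_datetime, parsedate_to_datetime
from typing import Dict, Optional, Tuple


def conditional_get(last_modified: datetime,
                    if_modified_since: Optional[str]) -> Tuple[int, Dict[str, str]]:
    """Return (status, headers) for a resource last changed at last_modified.

    Assumes last_modified is an aware datetime using timezone.utc.
    """
    # HTTP dates only carry whole seconds, so drop microseconds before comparing.
    last_modified = last_modified.replace(microsecond=0)
    headers = {"Last-Modified": format_datetime(last_modified, usegmt=True)}

    if if_modified_since:
        try:
            since = parsedate_to_datetime(if_modified_since)
        except (TypeError, ValueError):
            since = None  # unparseable header: fall back to a full response
        # Only compare when the client's date carries a timezone.
        if since is not None and since.tzinfo is not None and last_modified <= since:
            return 304, headers  # headers only, no body is sent

    return 200, headers  # full response with body


if __name__ == "__main__":
    changed = datetime(2006, 1, 31, 1, 30, tzinfo=timezone.utc)
    print(conditional_get(changed, "Tue, 31 Jan 2006 01:30:00 GMT"))
    print(conditional_get(changed, None))
```

The saving comes from the 304 branch: the client keeps using its cached copy and only a few hundred bytes of headers cross the wire.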

for all your static content, it's just a case of enabling the server to send the correct headers (with a bit of configuration), who's your host and which package are you using? i'll have a look at the features and see if you can do this from their control panel (try looking for something about "HTTP headers" unless there's a specific "caching" section) if not i'm sure you could ask an admin to do it, as you must be a good customer of theirs

i'll mention some tips specifically for things like images (which will be most of your bandwidth), i suggest putting all images in an /images/ folder (in the root, or somewhere appropriate) and setting full caching headers (including cache-control) for it (and allowing all child objects to inherit settings, thus all your images will have the same settings) and set the cache expiration at a week (or something long, perhaps a month maybe?)

if cache-control is used the client won't even check to see if it's changed for the specified amount of time (an hour for documents? even longer for archives, or anything that's never gonna change) but it's not recommended for frequently changing content, or content for which the time it'll change is unknown (like a new page/feed) because you don't want it cached when it's been updated, you want the client to always check using if-modified-since
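The policy above can be sketched as a small function that picks a Cache-Control value per path. This is only an illustration of the rule, not server configuration; the `/images/` prefix and one-week lifetime come from the suggestion above, while `cache_control_for` and the `no-cache` fallback (store, but revalidate every time) are assumptions.

```python
ONE_WEEK = 7 * 24 * 60 * 60  # seconds


def cache_control_for(path: str) -> str:
    """Pick a Cache-Control header value for a request path."""
    if path.startswith("/images/"):
        # Rarely changing content: clients may reuse it for a week without asking.
        return f"public, max-age={ONE_WEEK}"
    # Content that changes at unknown times: a cached copy may be kept, but the
    # client must revalidate (e.g. with If-Modified-Since) before reusing it.
    return "no-cache"


if __name__ == "__main__":
    for path in ("/images/logo.png", "/stats/"):
        print(path, "->", cache_control_for(path))
```

On a real host the same effect would normally be achieved through the web server's own configuration or control panel rather than application code.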

as for PHP, if you're using any, i'm guessing you must be, it'll have to be done internally, but there are plenty of guides and tutorials out there to learn how, if it's hard to do, especially for highly dynamic content, and you have the bandwidth to spare (after the other changes) then i wouldn't bother, but for simple PHP it would probably be worth the effort, once you've got it working you'll notice even more of a difference in addition to the reduction the other changes will make

[edit]

something to help caching in general, if linking to your home page for example, use href="/" and not href="/index.html" or href="index.html"

because as far as a client is concerned, / and /index.html could be different documents, so they will not be treated as the same thing

so all your links need to be the same (this also goes for any other pages ie use href="/stats/" and not href="/stats/index.html")

[/edit]
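A quick way to keep links consistent is to normalise them when pages are generated. The sketch below strips a trailing index document from an href; `canonical_href` is a hypothetical helper, and the list of index file names is an assumption that depends on how the server is configured.

```python
from typing import Tuple


def canonical_href(href: str,
                   index_names: Tuple[str, ...] = ("index.html", "index.htm", "index.php")) -> str:
    """Rewrite links to a directory's index document as links to the directory."""
    head, sep, tail = href.rpartition("/")
    if tail in index_names:
        # "/stats/index.html" -> "/stats/"; a bare "index.html" -> "./"
        return head + sep if sep else "./"
    return href  # anything else (including URLs with query strings) is left alone


if __name__ == "__main__":
    for link in ("/index.html", "/stats/index.html", "/stats/", "index.html"):
        print(link, "->", canonical_href(link))
```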

i'm guessing you're using valid markup and external CSS stylesheets as much as possible?

that'll help to reduce the amount of "bloat code" being sent

[edit]run it thru the (X)HTML validator and CSS Validator to check, good efficient, lean code will help a lot too, using strict DTDs rather than transitional[/edit]

[edit]

as for other useful tools and validators, there's one for robots.txt files, yours is currently valid, which is good, but the link is for future reference if you ever change it

FEED Validator is great for Atom and RSS feeds, unfortunately your feed doesn't validate (at time of writing), you need to use RFC 822 dates (eg - Tue, 31 Jan 2006 01:30:00 GMT - but you can change the timezone used), and if you want to use HTML in the description, you need to enter it as CDATA

also using <pubDate>RFC 822 date/time</pubDate> for each item is a good idea too, it means readers don't have to check a feed as often to get accurate times for when items were published, because <pubDate></pubDate> specifies exactly when it was posted (i for one, make a point of checking feeds without <pubDate> every 15 mins, but only checking ones that do use <pubDate> every few hours)

you should also be serving an rss feed as application/rss+xml, or at least application/xml, not just text/xml

even if you can't change the MIME/content-type used you should definitely specify application/rss+xml as the type in your <link> tags, so that readers which are only capable of certain formats know which format you're using (because Atom is XML too, so text/xml could be either)

using caching will help greatly with the feed anyway

[/edit]
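For the date format and the `<link>` type mentioned above, a small sketch using only the Python standard library might look like this. The function names, feed URL and title are made up for illustration; the date layout is the one shown in the example above.

```python
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime
from html import escape


def rss_pub_date(when: datetime) -> str:
    """Format an aware datetime the way RSS <pubDate> expects, in GMT."""
    if when.tzinfo is None:
        raise ValueError("need a timezone-aware datetime")
    return format_datetime(when.astimezone(timezone.utc), usegmt=True)


def feed_link_tag(href: str, title: str) -> str:
    """Build the HTML <link> tag that advertises an RSS feed and its type."""
    return ('<link rel="alternate" type="application/rss+xml" '
            f'title="{escape(title, quote=True)}" href="{escape(href, quote=True)}">')


if __name__ == "__main__":
    posted = datetime(2006, 1, 31, 2, 30, tzinfo=timezone(timedelta(hours=1)))
    print(f"<pubDate>{rss_pub_date(posted)}</pubDate>")
    # Hypothetical feed location and title.
    print(feed_link_tag("/rss/news.xml", "Site news"))
```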

[edit]

to see the headers your server sends, there's a great web based tool that i've found extremely useful

[/edit]

hope all this helps and gives you plenty to read and get started with

any questions, please, don't hesitate to ask, if you want to contact me personally, my email is on my SETI profile (easy to find, as i'm the author of the caching thread on SETI, mentioned earlier)

Last edited by Lee Carre on Wed Mar 15, 2006 3:52 am, edited 2 times in total.

Want to search the BOINC Wiki, BOINCstats, or various BOINC forums from within firefox? Try the BOINC related Firefox Search Plugins

Hi,

Lee -> Thanks for the detailed info! The RSS validator is certainly handy - I'll update the feed script in a mo...

ClarkF1 -> I can PM you the name of my host if you like? I don't want to post it here, just in case some script kiddie decides to take out my site and others hosted by my provider.

Cheers,

Neil.

-

Lee Carre

- Member

- Posts: 17

- Joined: Tue Jan 31, 2006 1:34 am

- Location: Jersey, Channel Islands, Great Britain (UK)

Neil wrote:
Lee -> I've just updated the RSS feed, but the CDATA stuff doesn't seem to work for items that have anchor tags in them.

Any ideas?

i'm guessing my example wasn't too clear, sorry about that

for each description element, you obviously need a <description> opening tag, but you need to wrap the HTML in a CDATA container

to open the CDATA, use <![CDATA[

then to close the CDATA, use ]]>

then have your closing </description> tag

so we get:

Code: Select all

<description><![CDATA[

enter your (HTML) description content here, what you'd normally put between the description tags

]]></description>

you've currently got

Code: Select all

<description><![CDATA[HTML content]]>

description content

</description>

so you need to have:

Code: Select all

<description><![CDATA[

then:

Code: Select all

]]></description>

hope that's a better attempt at an explanation
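If the feed is generated by a script, the wrapping can be done in one place. The sketch below is written in Python purely for illustration (the site's own feed script is not shown in this thread), and `cdata` and `description_element` are hypothetical helpers. The one subtlety is that the sequence `]]>` cannot appear inside a CDATA section, so it is split across two sections.

```python
import xml.etree.ElementTree as ET


def cdata(text: str) -> str:
    """Wrap text in a CDATA section, splitting any "]]>" it contains."""
    return "<![CDATA[" + text.replace("]]>", "]]]]><![CDATA[>") + "]]>"


def description_element(html: str) -> str:
    """Build an RSS <description> whose whole content sits inside CDATA."""
    return "<description>" + cdata(html) + "</description>"


if __name__ == "__main__":
    html = 'New stats are up, see the <a href="http://example.com/">stats page</a>.'
    element = description_element(html)
    print(element)
    # Parsing it back returns the original HTML as plain text content.
    print(ET.fromstring(element).text == html)
```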

Last edited by Lee Carre on Wed Mar 15, 2006 3:52 am, edited 1 time in total.

-

Halifax--lad

- Established Member

- Posts: 227

- Joined: Sat Sep 10, 2005 11:27 pm

I was looking at this provider the other day: http://unitedhosting.co.uk/ . They have servers in the UK & USA and seem pretty cheap to me, with the option to increase bandwidth if you go over the 20GB limit they have in place (£1 per GB after the limit is reached).

Seem to have all the requirements you need too

Hi all,

Thanks for the hosting info. I'll see how the robots.txt file affects the GoogleBot for Feb before deciding whether to move hosts.

Lee -> I feel that we're almost there! The CDATA stuff seems to work, but in Firefox (at least), it doesn't render the anchor tags (i.e. make clickable links).

Is there any way to do this?

Thanks for your help,

Neil.

-

Lee Carre

- Member

- Posts: 17

- Joined: Tue Jan 31, 2006 1:34 am

- Location: Jersey, Channel Islands, Great Britain (UK)

Neil wrote:
Lee -> I feel that we're almost there! The CDATA stuff seems to work, but in FireFox (at least), it doesn't render the anchor tags (i.e. make clickable links).

Is there anyway to do this?

Thanks for your help

my initial reaction is to ask why you'd want this? obviously it'd be nice, but a feed is meant to be read by a reader (hence serving it with a suggested MIME type of "application/xml" (well actually "application/rss+xml"), meaning that it needs to be processed by an application before being presented to the user; it's not just plain text, otherwise it would be "text/xml")

the news section on your home page is for regular browser use, the feed is just another means to get that same news, it's not meant to replace it

the raw data in a feed isn't meant to be seen by users, it's for feed readers to parse, then display to users

a web browser is needed to render (X)HTML documents, and a feed reader is needed to render RSS/Atom feeds, the two are separate things, a feed isn't meant to be a web page

it basically comes down to a choice of which format a user prefers

however, i'll look into this
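To show what "parse, then display" means in practice, here is a minimal sketch of what a feed reader does with a CDATA description: it reads the text out of the XML, then treats that text as HTML. The sample feed, its URL and the helper names are all made up for illustration.

```python
import xml.etree.ElementTree as ET
from html.parser import HTMLParser
from typing import List

# Hypothetical feed, not the site's real one.
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0"><channel>
<title>Example news</title>
<item>
<title>Hypothetical item</title>
<description><![CDATA[See the <a href="http://example.com/stats/">stats page</a>.]]></description>
</item>
</channel></rss>"""


class LinkCollector(HTMLParser):
    """Collect href values from anchor tags in an HTML fragment."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in_feed(feed_xml: str) -> List[str]:
    """Return every link found inside the feed's <description> elements."""
    collector = LinkCollector()
    for description in ET.fromstring(feed_xml).iter("description"):
        # The XML parser hands back the CDATA content as ordinary text,
        # which the reader then interprets as HTML.
        collector.feed(description.text or "")
    return collector.links


if __name__ == "__main__":
    print(links_in_feed(SAMPLE_FEED))
```

A browser showing the raw feed stops after the first step, which is why the anchors appear as text there rather than as clickable links.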

Last edited by Lee Carre on Wed Mar 15, 2006 3:53 am, edited 1 time in total.
